How to Create a Web Scraping Tool in PowerShell

Web scraping tools are useful for gathering data from various webpages. For example, price comparison sites that share the best deals usually grab their information from specific feeds e-tailers set up for that purpose. However, not all online sellers make price feeds available. In these instances, comparison sites can use web scraping to grab the information they need.

Because website design varies and websites all have unique structures, you must create customized scrapers. Luckily, scripting languages like PowerShell help you build reliable web scraping tools. Use built-in PowerShell cmdlets such as Invoke-WebRequest to extract the information you need.

Keep an eye on your competitors’ prices by creating a web scraper and using Windows Task Scheduler to monitor prices once daily. To run your scraper as part of a web application, host it on an IIS server and manage it with IIS application pools.

Web scraping explained

Web scraping is the art of parsing an HTML webpage and gathering elements in a structured manner. Because HTML pages have specific structures, it’s possible to parse through them and retrieve semi-structured output. Note the use of the qualifier “semi.” Most pages aren’t perfectly formatted behind the scenes and may hold website design mistakes, so your output may not be perfectly structured.

Still, scripting languages like Microsoft PowerShell – along with a little ingenuity and some trial and error – help you build reliable web scraping tools to pull information from many different webpages.

It’s important to remember that webpage structures vary wildly. If even a small element is changed, your web scraping tool may no longer work. Focus on the basics first and then build more specific tools for particular webpages.

Web scraping can greatly enhance your marketplace knowledge. However, you may want to consult a business lawyer about the legalities of scraping specific sites before you get started.

How to create a web scraping tool in PowerShell

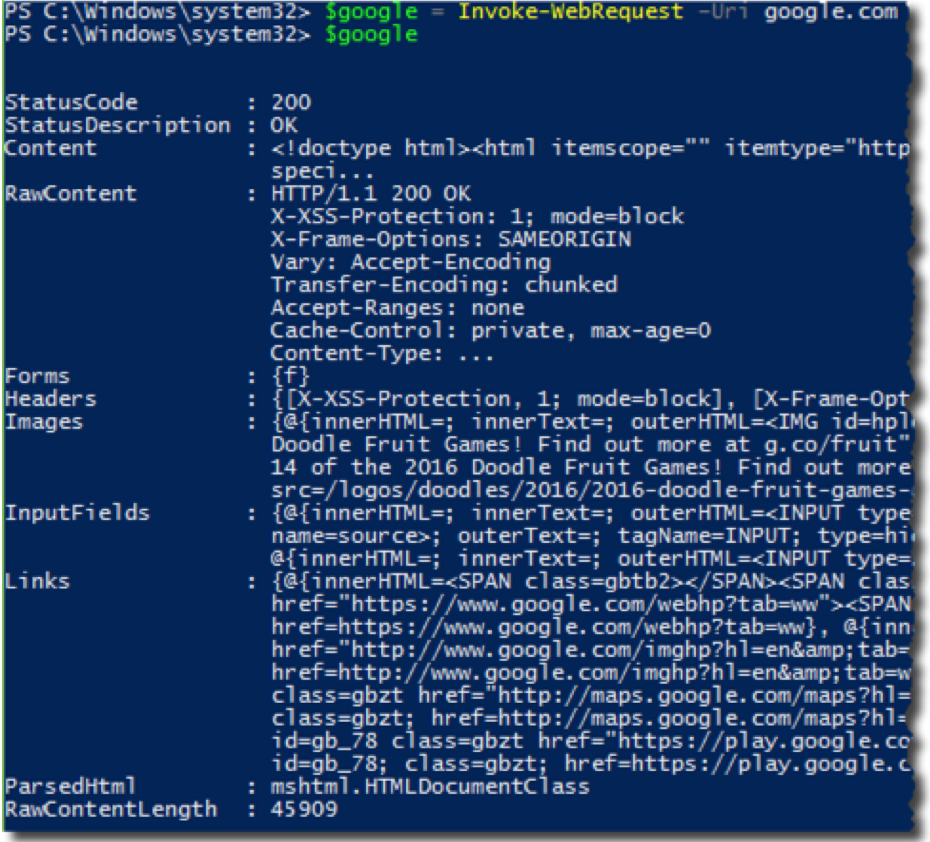

The command of choice is Invoke-WebRequest. This command should be a staple in your web scraping arsenal. It simplifies pulling down webpage data and allows you to focus on parsing the data you need.

1. See how a web scraping tool views Google.

To get started, let’s use a simple webpage everyone is familiar with, Google.com, and see how a web scraping tool views it.

First, pass Google.com to the Uri parameter of Invoke-WebRequest and inspect the output.

$google = Invoke-WebRequest -Uri google.com

This is a representation of the entire Google.com page, all wrapped up in an object for you.

The Invoke-WebRequest command is highly versatile. In Windows PowerShell 5.1, it works with HTTP, HTTPS and FTP addresses (PowerShell 7 supports HTTP and HTTPS only), which gives you more choices on where to source information and data.
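To get a feel for what that object holds, here is a minimal sketch. Get-PageSummary is a hypothetical helper name, not a built-in command; it simply requests a page and reports a few properties of the response (StatusCode, Content, Links and Images).

```powershell
# Hypothetical helper (not part of PowerShell): summarizes a web response.
function Get-PageSummary {
    param(
        [Parameter(Mandatory)]
        [string]$Uri
    )

    # -UseBasicParsing skips the Internet Explorer-based DOM parsing
    # that Windows PowerShell 5.1 would otherwise attempt.
    $response = Invoke-WebRequest -Uri $Uri -UseBasicParsing

    [pscustomobject]@{
        Uri        = $Uri
        StatusCode = $response.StatusCode        # HTTP status code of the reply
        Length     = $response.Content.Length    # size of the returned HTML, in characters
        LinkCount  = @($response.Links).Count    # number of links found on the page
        ImageCount = @($response.Images).Count   # number of images found on the page
    }
}
```

Calling `Get-PageSummary -Uri 'https://www.google.com'` returns a single object you can inspect before digging into individual properties.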

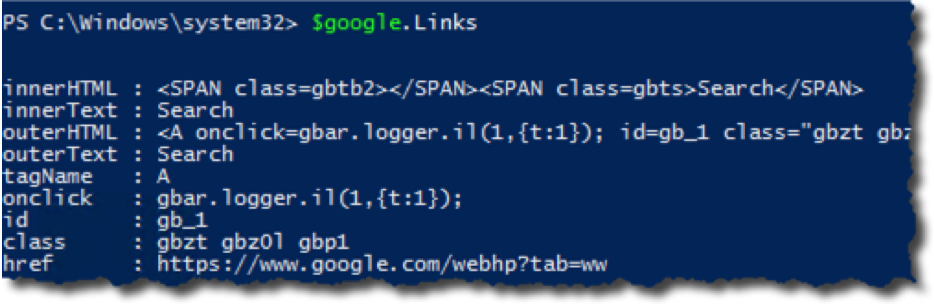

2. Pull information from the webpage.

Now, let’s see what information you can pull from this webpage. For example, say you need to find all the links on the page. To do this, you’d reference the Links property. This will enumerate various properties of each link on the page.

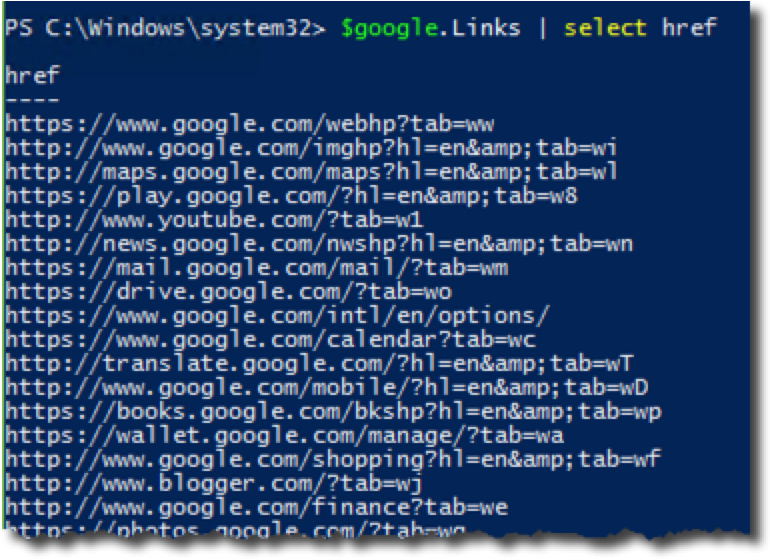

Perhaps you just want to see the URL that it links to:
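One way to do that is to read the href property of every entry in Links. The sketch below wraps the idea in a hypothetical Get-PageLinkUrl helper (the name is an assumption for illustration) that also drops empty values and duplicates.

```powershell
# Hypothetical helper: lists the distinct URLs a page links to.
function Get-PageLinkUrl {
    param(
        [Parameter(Mandatory)]
        [string]$Uri
    )

    $response = Invoke-WebRequest -Uri $Uri -UseBasicParsing

    # Each entry in Links carries an href property; skip empty ones and remove duplicates.
    $response.Links.href | Where-Object { $_ } | Sort-Object -Unique
}
```

If you already have the page in a variable, `$google.Links.href` gives you the same list without the filtering.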

How about the anchor text and the URL? Since this is just an object, it’s easy to pull information like this:

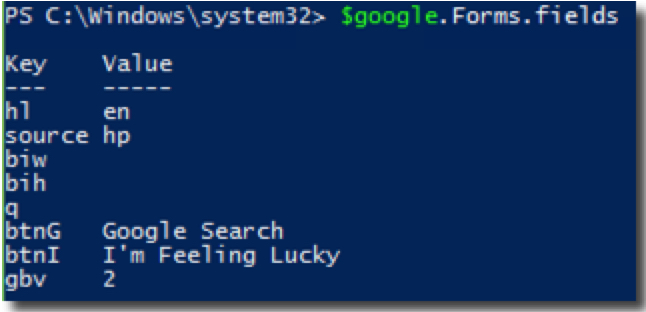
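The exact properties on each link depend on how the page was parsed: full parsing in Windows PowerShell 5.1 exposes innerText, while basic parsing exposes only the raw outerHTML. The following sketch, built around a hypothetical Get-LinkText helper, tries to cope with both by stripping tags when no innerText is available. The tag-stripping regex is a rough approximation, not a full HTML parser.

```powershell
# Hypothetical helper: pairs each link's visible text with its URL.
function Get-LinkText {
    param(
        [Parameter(Mandatory, ValueFromPipeline)]
        $Link
    )

    process {
        if ($Link.PSObject.Properties['innerText']) {
            # Full parsing already provides the anchor text.
            $text = $Link.innerText
        } else {
            # Basic parsing: remove every tag from the raw HTML to approximate the text.
            $text = $Link.outerHTML -replace '<[^>]+>', ''
        }

        [pscustomobject]@{
            Text = "$text".Trim()
            Url  = $Link.href
        }
    }
}
```

With the page from the earlier step, `$google.Links | Get-LinkText` would list the anchor text next to each URL.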

You can also see what the famous Google.com form with the input box looks like under the hood:
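In Windows PowerShell 5.1 with full parsing, the response has a Forms property for this. The InputFields property is available with basic parsing as well, so the sketch below uses it. Get-PageInputField is a hypothetical name, and the name, type and value columns are only filled in when the page sets those attributes.

```powershell
# Hypothetical helper: lists the <input> elements found on a page.
function Get-PageInputField {
    param(
        [Parameter(Mandatory)]
        [string]$Uri
    )

    $response = Invoke-WebRequest -Uri $Uri -UseBasicParsing

    # InputFields holds one object per <input> element; keep the most useful attributes.
    $response.InputFields | Select-Object name, type, value
}
```

For example, `Get-PageInputField -Uri 'https://www.google.com'` should include the search box among its results.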

If your scraper stops working, the website structure has likely changed. Unfortunately, you’ll have to build a new web scraper.

How to download the information you’ve scraped

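Let’s take this one step further and download information from a webpage. For example, perhaps you want to download all images on the page. To do this, we’ll also use the -UseBasicParsing parameter. This parameter makes the request faster because Invoke-WebRequest doesn’t build and crawl the DOM. (PowerShell 6 and later always use basic parsing, so the parameter mainly matters in Windows PowerShell 5.1.)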

1. Download images from the webpage.

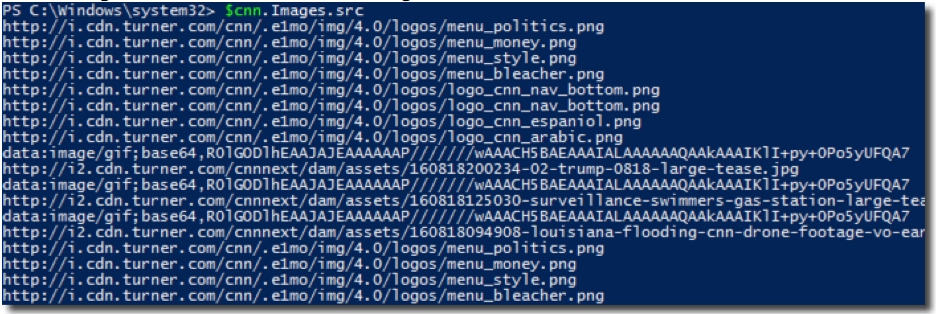

For another example, here’s how to use PowerShell to enumerate all images on the CNN.com website and download them to your local computer.

$cnn = Invoke-WebRequest -Uri cnn.com -UseBasicParsing

2. Find the images’ source URLs.

Now let’s find the URL where each image is hosted.

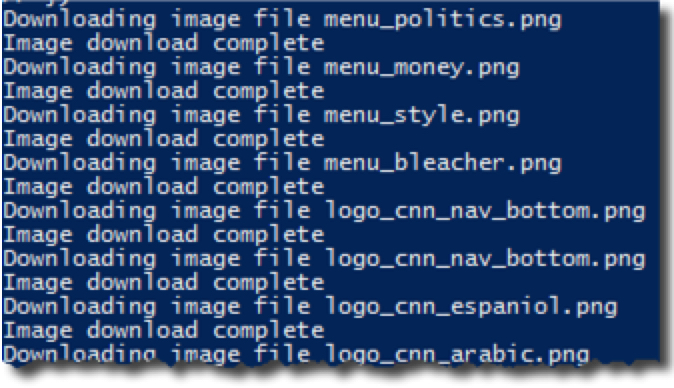
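The src values on a page are not always complete addresses: some start with // or are relative to the site. As a hedged sketch, the hypothetical Resolve-ImageUrl helper below uses the .NET System.Uri class to turn each src value into an absolute URL before you try to download it.

```powershell
# Hypothetical helper: converts image src values into absolute URLs.
function Resolve-ImageUrl {
    param(
        [Parameter(Mandatory)]
        [uri]$BaseUri,

        [string[]]$Source
    )

    foreach ($src in $Source) {
        # Some <img> tags have no usable src; skip them.
        if ([string]::IsNullOrWhiteSpace($src)) { continue }

        # Uri(baseUri, relativeUri) handles absolute, protocol-relative and relative values.
        ([uri]::new($BaseUri, $src)).AbsoluteUri
    }
}
```

With the page from the previous step, you could call `Resolve-ImageUrl -BaseUri 'https://www.cnn.com/' -Source $cnn.Images.src`.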

3. Download the images.

Once you have the URLs, all you need to do is use Invoke-WebRequest again. However, this time, you’ll use the -OutFile parameter to send the response to a file.

@($cnn.Images.src).foreach({

$fileName = $_ | Split-Path -Leaf   # last segment of the image URL

Write-Host "Downloading image file $fileName"

Invoke-WebRequest -Uri $_ -OutFile "C:\$fileName"   # save to the root of the C: drive

Write-Host 'Image download complete'

})
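The loop above assumes every URL is complete and every file name is valid. As a slightly more defensive sketch, the hypothetical Save-WebImage function below takes absolute image URLs, creates the destination folder if needed, derives the file name from the URL path (so query strings are left out) and carries on when a single download fails. The function name and its parameters are assumptions for illustration.

```powershell
# Hypothetical helper: downloads a list of absolute image URLs into a folder.
function Save-WebImage {
    param(
        [Parameter(Mandatory)]
        [uri[]]$ImageUrl,

        [Parameter(Mandatory)]
        [string]$Destination
    )

    # Create the target folder if it does not exist yet.
    if (-not (Test-Path -Path $Destination)) {
        New-Item -Path $Destination -ItemType Directory | Out-Null
    }

    foreach ($url in $ImageUrl) {
        # Use only the path part of the URL so query strings do not end up in the file name.
        $fileName = [System.IO.Path]::GetFileName($url.AbsolutePath)
        if (-not $fileName) { continue }

        $target = Join-Path -Path $Destination -ChildPath $fileName
        try {
            Invoke-WebRequest -Uri $url -OutFile $target -UseBasicParsing -ErrorAction Stop
            Write-Host "Saved $target"
        }
        catch {
            # Report the failure and move on to the next image.
            Write-Warning "Could not download ${url}: $($_.Exception.Message)"
        }
    }
}
```

A call such as `Save-WebImage -ImageUrl 'https://example.com/logo.png' -Destination 'C:\Images'` (both values are placeholders) would save logo.png into C:\Images.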

In this case, the images are saved directly to the root of the C: drive, but you can easily change this location to a different one. With PowerShell’s ability to manage file system ACLs, you get the freedom to save images to your directory of choice.

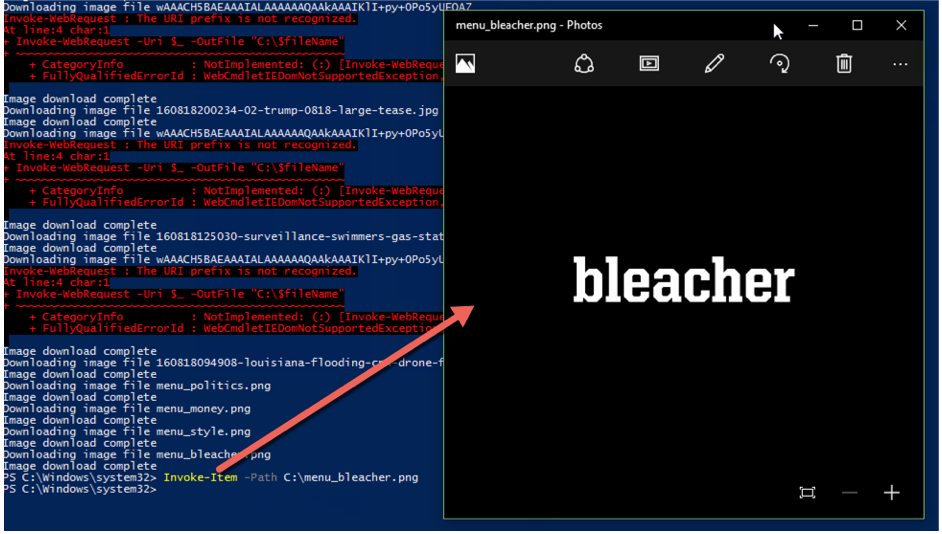

4. Test the images from PowerShell.

If you’d like to test the images directly from PowerShell, use the Invoke-Item command to pull up the image’s associated viewer. You can see below that Invoke-WebRequest pulled down an image from CNN.com with the word “bleacher.”

Building your own web scraping tool is straightforward

Use the code in this article as a template to build your own tool. For example, you could build a PowerShell function called Invoke-WebScrape with a few parameters like –Url or –Links. Once you have the basics down, you can easily create a customized tool to apply in many different ways.
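As a starting point, here is one possible shape for such a function. It is only a sketch: the -Images switch and the choice to return the whole response when no switch is given are assumptions added for illustration.

```powershell
# A minimal sketch of the Invoke-WebScrape idea; parameters beyond -Url and -Links are assumptions.
function Invoke-WebScrape {
    [CmdletBinding()]
    param(
        [Parameter(Mandatory)]
        [string]$Url,

        [switch]$Links,

        [switch]$Images
    )

    $response = Invoke-WebRequest -Uri $Url -UseBasicParsing

    # Return link targets and/or image sources, depending on the switches used.
    if ($Links)  { $response.Links.href | Where-Object { $_ } }
    if ($Images) { $response.Images.src | Where-Object { $_ } }

    # With no switch given, hand back the whole response so the caller can explore it.
    if (-not ($Links -or $Images)) { $response }
}
```

For example, `Invoke-WebScrape -Url 'https://www.cnn.com' -Images` would list the image sources found on that page.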

Mark Fairlie contributed to this article.